Inspired by an invitation to present on the topic to politicians in Madrid who apparently want to stop the practice of acupuncture by doctors in Spain – 18 January 2019.

I have been lecturing on the scientific perspectives of acupuncture around the globe for over two decades. I have numerous PowerPoint slides to call upon, but new data is always available… I should have gone for history rather than science with hindsight ;-).

Whilst creating a new presentation out of one I had used at a conference only months before, I came to add a very old slide that comes with a story.

The theme is bias, and it intertwines with the conquering of healthcare by evidence-based medicine (EBM) in the early 90s.[1]

Some readers may remember an EBM publication called Bandolier. It was rather like a newsletter that dissected the data supporting different healthcare practices, and it was sent out to all GPs in the land, care of handsome funding from the Department of Health. It was written and edited by senior characters from the Pain Research Unit in Oxford, who probably were too important to require external peer review :D.

The Bandolier Boys (BB), as I like to refer to them, were particularly dismissive of all CAM therapies. A typical quote on the evidence for a therapy might be: “…and that doesn’t stack up to a row of beans”, or some such dismissive rejoinder.

On publication of a leaflet attempting to explain ‘Bias’ to the EBM-ignorant GP masses of the era, the BB chose to use acupuncture trials to illustrate their points. Clearly this got my attention, and I could not stop myself responding.

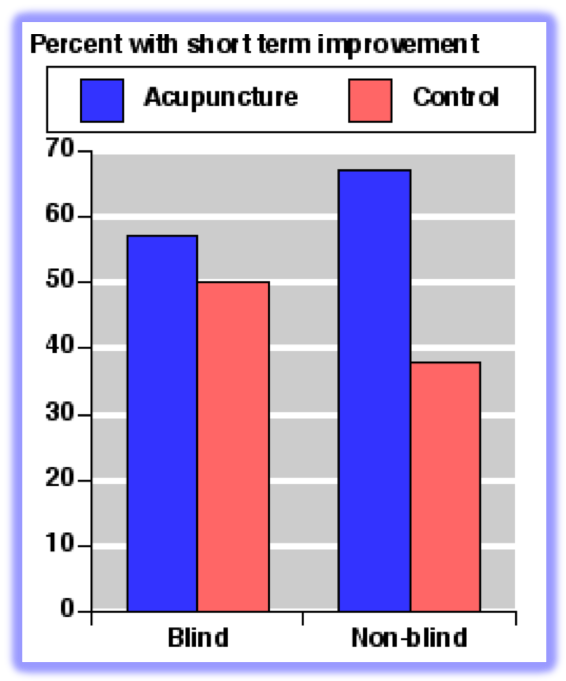

An infamous bar chart illustrating the bias related to a lack of blinding in RCTs was produced using data from a systematic review (SR) they (the BB team) performed on acupuncture for back and neck pain.[2,3]

As you can see from the bar chart, there is a big difference between acupuncture and control in unblinded studies, but almost no difference in the better quality, blinded ones. This led to the conclusion by the BB that the apparent effectiveness of acupuncture was entirely related to the [detection] bias inherent in unblinded trials. So what exactly is detection bias? Well, the Cochrane Handbook for Systematic Reviews of Interventions says:

- Detection bias is systematic differences between groups in how outcomes are determined.

- Blinding of outcome assessors can be especially important for assessment of subjective outcomes, such as degree of postoperative pain.

- Blinding (or masking) of outcome assessors may reduce the risk that knowledge of which intervention was received, rather than the intervention itself, affects outcome measurement.

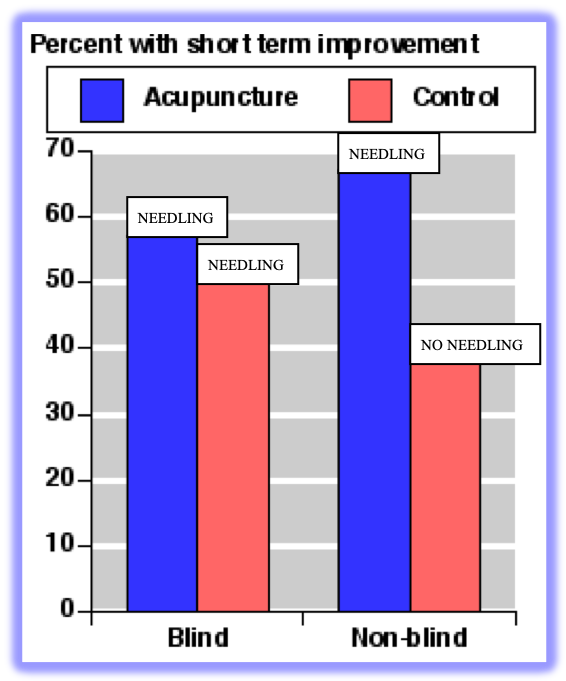

What the BB apparently did not consider was the difference between the interventions administered in the control groups of blinded and unblinded trials of acupuncture. In their SR all the subjects in the control groups of blinded acupuncture trials were needled, and in the unblinded trials they were not needled. So all the columns in the bar chart at 50% or above represent subjects who were given gentle or standard needling, and the column below 40% represents subjects who were not given any needling, since you do not need to needle the control group in an unblinded trial of acupuncture.

I wrote to the BB when this leaflet on bias was circulated to GPs. My contention was that the effect in the control group of the blinded studies of acupuncture was big enough [50%] to equate to interventions that worked in chronic pain [according to a book from the BB], and since it was a ‘placebo’ it must be harmless. So I said we should use that in clinical practice.

The response was: “You’re missing the point sonny! They [the subjects in the control group of blinded acupuncture trials] would have got better on their own.”

I could not argue with that pure EBM response, so I had to wait.

As luck would have it, one of the BB was invited to speak at an event in the Royal Institution alongside me, in front of a lay audience of 200 or more in the new pink seats of their famous steeply tiered lecture theatre. This is the same lecture theatre that is home to the famous ‘Christmas Lectures’ and was originally home to the scientific demonstrations of Michael Faraday et al.

I was appalled to see the same old bar chart on bias wheeled out, so took my opportunity to call it back and relate my tale in communicating with the BB and their response. I added the ‘sonny’ and possibly an extra ‘row of beans’ [that acupuncture doesn’t add up to ;-)]. The audience were rolling in the aisles when I played my coup de grâce by explaining that the subjects in the 3 tallest columns were all needled, and the subjects in the shorter column were not.

Hearing the audience reaction immediately relieved my chronic frustration at the blind EBM approach to acupuncture research. But on returning to my seat, the BB guy leaned over and said: “Are you sure they were all needled in the control groups?”

My response [at least the one in my head that I wanted to say] was: “You did the systematic review. Didn’t you read the methods section of the trials?”

But probably I just said: “Yes, I’m sure… I read the methods sections of acupuncture trials when I do SRs…”

References

1. Evidence-Based Medicine Working Group. Evidence-based medicine. A new approach to teaching the practice of medicine. JAMA 1992;268:2420–5. doi:10.1001/jama.1992.03490170092032

2. Smith LA, Oldman AD, McQuay HJ, et al. Teasing apart quality and validity in systematic reviews: an example from acupuncture trials in chronic neck and back pain. Pain 2000;86:119–32.

3. Cummings TM. Teasing apart the quality and validity in systematic reviews of acupuncture. Acupunct Med 2000;18:104–7.
